Ai data processing

AI data processing frameworks handle large datasets efficiently.

Distribute complex AI computations across multiple computers.

Examples:

Apache Spark – general-purpose AI and data processing.

Apache Flink and Apache Kafka – real-time AI data streaming.

Data

Collection

Collection

Data

Preparation

Preparation

Data

Input

Input

Data

Processing

Processing

Data Output &

Interpretation

Interpretation

Data

Storage

Storage

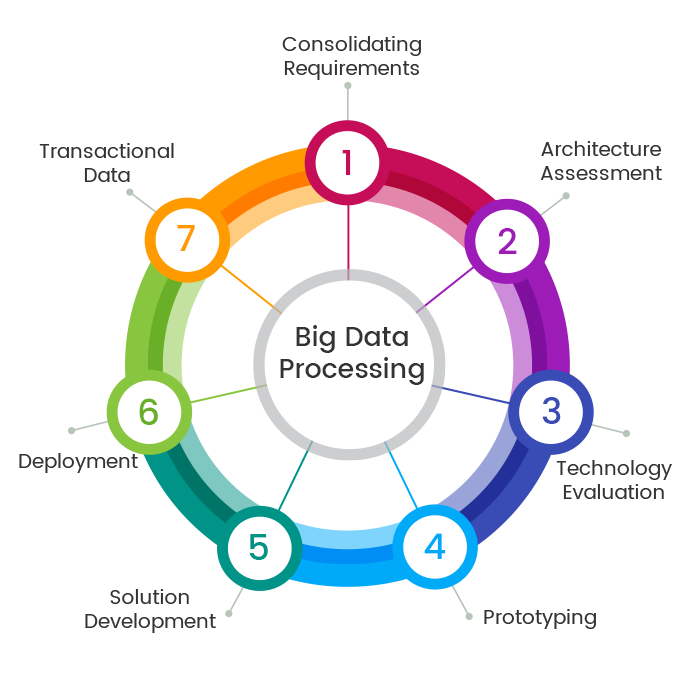

Provides guidelines, protocols, and tools for handling data.

Covers the entire process from data collection to final output.

Often documented as formal frameworks or PDFs.

Includes key stages: collection, preparation, processing, storage, and presentation.

Data Processing Frameworks in AI

Fact #1

Big Data Processing Engine

Apache Spark is a powerful distributed computing framework for large-scale data processing. It processes massive datasets across clusters, making it up to 100 times faster than traditional systems through in-memory processing. Ai data processing Spark includes MLlib for machine learning algorithms and handles both batch and streaming data. Data scientists use it for ETL operations, feature engineering, and training AI models on petabytes of data. Companies like Netflix, Uber, and Airbnb rely on Spark for their recommendation systems and analytics pipelines.

Fact #2

Real-Time Data Streaming

Apache Kafka is a distributed streaming platform that handles real-time data feeds with high throughput and low latency. It acts as a message broker, collecting data from multiple sources and distributing it to AI applications. Kafka handles millions of events per second, making it ideal for fraud detection, real-time recommendations, and monitoring systems. It uses a publish-subscribe model where multiple AI models can process the same data stream simultaneously. Companies like LinkedIn, Twitter, Spotify, and PayPal use Kafka for their AI-driven features.

Fact #3

Distributed Storage and Processing

Apache Hadoop is a framework for distributed storage and processing of large datasets across clusters. HDFS (Hadoop Distributed File System) stores data across multiple machines for fault tolerance. Hadoop's MapReduce processes massive datasets in parallel by breaking tasks into smaller chunks. While Spark is faster, Hadoop remains essential for storing huge amounts of AI training data cost-effectively. Its ecosystem includes Hive for SQL queries, Pig for scripting, and HBase for NoSQL databases. Organizations use Hadoop to store historical data and perform large-scale data transformations.

Fact #4

Python Data Manipulation

Pandas is the most popular Python library for data manipulation, providing DataFrames for organizing and transforming data. It offers methods for cleaning data, handling missing values, merging datasets, and performing aggregations. Data scientists use Pandas in the data preparation phase before training AI models. Dask extends Pandas to work with larger-than-memory datasets by processing data in parallel across multiple cores or machines. It uses the same Pandas API, making it easy to scale from small to large datasets without rewriting code.

Fact #5

Stream Processing Framework

Apache Flink is a distributed stream processing framework for real-time data analytics. Unlike batch processing, Flink treats data as continuous streams, enabling true real-time AI applications. It provides exactly-once processing semantics and low-latency processing, making it ideal for fraud detection, network monitoring, and dynamic pricing systems.Ai data processing Flink supports both stream and batch processing with the same APIs. Companies use Flink for real-time recommendation engines, anomaly detection in financial transactions, and processing IoT sensor data for predictive maintenance.

Fact #6

Distributed Computing for AI Workloads

Ray is a modern distributed computing framework specifically designed for AI and machine learning workloads. It makes it simple to parallelize Python applications and scale them across clusters. Ray provides libraries like Ray Tune for hyperparameter tuning, Ray Train for distributed training, and Ray Serve for model deployment. It handles both data processing and model training in a unified framework, automatically managing task scheduling, resource allocation, and fault tolerance. Companies like OpenAI, Uber, and Shopify use Ray to train large-scale AI models and serve predictions at scale.